The Hidden Tax of Multi-Agent Systems: Why a 5-Agent Setup Costs 15x More to Run Than 1

There's a Reddit thread on r/AI_Agents this week that asks the exact question every SMB owner asks the moment they hear "agent team": is multi-agent architecture worth the 15x token cost? The thread has 21 comments and almost no consensus. Most of the answers are some version of "depends on the use case," which is technically true and operationally useless.

Here's the version that's actually useful: most SMBs running a 5-agent setup right now are paying the 15x tax and getting roughly 1.5x the output. That's not a multi-agent problem. That's a problem with how the system was designed. The math is fixable. You just have to know where the multiplier comes from.

Where the 15x actually comes from

A single AI employee doing one role end-to-end has a token budget that looks roughly like this for a typical task:

- The user prompt or incoming message

- The agent's system prompt (role description, tools, constraints)

- The accumulated context (prior conversation, memory)

- Tool calls and their results, looped through until the task is done

- The final response

Call that 1x.
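To make the 1x concrete, here's a minimal Python sketch that adds those components up. It assumes a stateless chat API that re-sends the whole conversation on every model call, which is why each tool round re-reads everything that came before it. `TaskBudget`, `single_agent_tokens`, and every number in the example are hypothetical placeholders, not measurements.

```python
from dataclasses import dataclass

@dataclass
class TaskBudget:
    """Hypothetical token counts for one single-agent task (illustrative only)."""
    user_prompt: int
    system_prompt: int     # role description, tools, constraints
    context: int           # prior conversation + memory
    tool_call: int         # tokens the model emits per tool call
    tool_result: int       # tokens each tool result adds to the conversation
    tool_rounds: int       # tool-call loops before the task is done
    final_response: int

def single_agent_tokens(b: TaskBudget) -> int:
    """Total input + output tokens, assuming every model call re-sends the full history."""
    history = b.system_prompt + b.context + b.user_prompt
    total = 0
    for _ in range(b.tool_rounds):
        total += history + b.tool_call           # read everything so far, emit a tool call
        history += b.tool_call + b.tool_result   # the call and its result join the history
    total += history + b.final_response          # last call reads it all, emits the answer
    return total

budget = TaskBudget(user_prompt=200, system_prompt=1_500, context=2_000,
                    tool_call=100, tool_result=500, tool_rounds=3, final_response=400)
print(single_agent_tokens(budget))  # 19100
```

In this toy example, three tool rounds turn what would be a 4,100-token exchange into 19,100 tokens, because every round re-reads the growing history.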

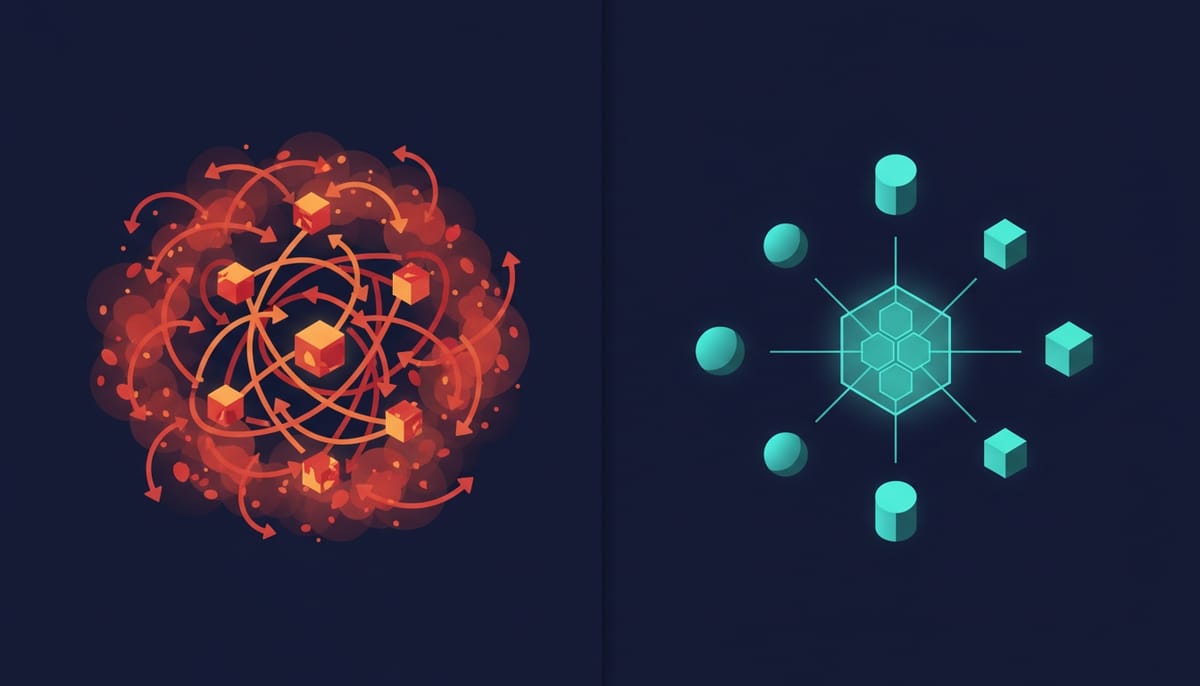

A 5-agent setup running the same task has those same components multiplied by five. And it's worse than 5x, because of three categories of overhead that don't exist in the single-agent case.

Coordination overhead. When agents talk to each other, every hand-off includes the context the receiving agent needs to act on, and that context gets pasted forward, often more than once. A supervisor that delegates to three workers and then asks for a status check may have read the same context block four times. We've seen real production traces where 40% of total tokens were redundant context passed between agents.
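One way to check whether your own traces look like that is to measure how much input goes to re-reading context that an earlier call already consumed. The sketch below assumes a trace exported as a list of model calls, each holding the context blocks pasted into it. `redundant_context_share` is a hypothetical helper; it counts words instead of real tokens and only catches exact duplicates, so paraphrased copies slip through.

```python
from collections.abc import Iterable

def approx_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(text.split())

def redundant_context_share(trace: Iterable[list[str]]) -> float:
    """Fraction of input tokens spent re-reading context blocks that an
    earlier call in the same trace already consumed (exact matches only)."""
    seen: set[str] = set()
    total = redundant = 0
    for call_blocks in trace:        # one entry per model call
        for block in call_blocks:    # context blocks pasted into that call
            n = approx_tokens(block)
            total += n
            if block in seen:
                redundant += n
            seen.add(block)
    return redundant / total if total else 0.0

brief = "Customer Acme wants a refund for order 1182 shipped late"
trace = [
    [brief, "check shipping logs"],   # worker 1
    [brief, "draft apology email"],   # worker 2
    [brief, "issue refund"],          # worker 3
    [brief, "report status"],         # supervisor status check
]
print(f"{redundant_context_share(trace):.0%}")  # 60%
```

The 60% here is toy data: it comes entirely from the same brief being pasted into four calls.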

Reflection overhead. Each agent in a multi-agent system typically reflects on its own output before passing it forward ("did I actually answer the question?"), and then the receiving agent reflects on what it received before acting. That's two reflections per hand-off, and one task can involve several hand-offs. Each reflection is a full prompt cycle.

Routing overhead. A supervisor agent that decides "which worker should handle this?" makes a model call for every routing decision. Sometimes one routing decision triggers a second, because the agent it picked first realizes it was the wrong choice. We've seen routing chains five layers deep on simple tasks because the supervisor was over-cautious.

Stack those three and you get 10-20x token consumption for the same task. The Reddit poster's 15x sits well within that range.
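Here's a back-of-the-envelope model of that stacking. It follows the framing above: each agent carries the full single-agent cost, every hand-off pastes context forward and triggers two reflections (sender and receiver), and every routing decision is its own model call. `MultiAgentRun`, `token_multiplier`, and all input values are illustrative assumptions, not numbers from real traces.

```python
from dataclasses import dataclass

@dataclass
class MultiAgentRun:
    """Placeholder parameters for one task run by a team of agents (not measurements)."""
    base_tokens: int          # single-agent cost of the task: the "1x"
    agents: int
    handoffs: int
    context_per_handoff: int  # context block pasted forward at each hand-off
    pastes_per_handoff: int   # how many times that block gets re-read
    reflection_tokens: int    # one full reflection prompt cycle
    routing_calls: int
    routing_tokens: int       # one routing decision

def token_multiplier(r: MultiAgentRun) -> float:
    work = r.agents * r.base_tokens                                       # "multiplied by five"
    coordination = r.handoffs * r.context_per_handoff * r.pastes_per_handoff
    reflection = r.handoffs * 2 * r.reflection_tokens                     # sender + receiver
    routing = r.routing_calls * r.routing_tokens
    return (work + coordination + reflection + routing) / r.base_tokens

run = MultiAgentRun(base_tokens=10_000, agents=5, handoffs=4, context_per_handoff=3_000,
                    pastes_per_handoff=2, reflection_tokens=4_000,
                    routing_calls=6, routing_tokens=2_000)
print(token_multiplier(run))  # 11.8
```

With every overhead set to zero, the model bottoms out at 5x, the "multiplied by five" floor. Everything above that is coordination, reflection, and routing.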

When the 15x is worth it

You pay the multi-agent tax when the workload is genuinely parallel. Examples that pencil out in production:

- A research task that needs to query 8 different sources, evaluate them independently, and synthesize. Eight workers in parallel finishing in the time of one is real value.

- A document review that needs five specialist passes (legal, finance, brand, accessibility, fact-check). Five reviewers running concurrently beats one reviewer doing five passes.

- A customer onboarding that triggers parallel side effects (CRM update, billing setup, calendar invite, welcome email, internal Slack ping). Five small agents, each owning one side effect, beat one agent doing them in series.

The pattern is the same: the work was already parallel, and the multi-agent architecture just stops the human (or the single agent) from being the serialization bottleneck. You're paying 15x in tokens to save the wall-clock time of running things in series.
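The trade is easy to see in a toy fan-out. This sketch uses Python's `asyncio.gather` to run eight simulated workers at once versus one after another. `review` is a hypothetical stand-in for an agent's model and tool calls, simulated with `asyncio.sleep`. In a real system each worker would still spend its own tokens either way; only the wall-clock time changes.

```python
import asyncio
import time

async def review(source: str, seconds: float) -> str:
    # Hypothetical worker: the sleep stands in for one agent's model and tool calls.
    await asyncio.sleep(seconds)
    return f"summary of {source}"

async def run_parallel(sources: list[str], seconds: float) -> list[str]:
    # Independent work: every worker starts at once; gather keeps input order.
    return list(await asyncio.gather(*(review(s, seconds) for s in sources)))

async def run_serial(sources: list[str], seconds: float) -> list[str]:
    # The same work with one agent as the serialization bottleneck.
    return [await review(s, seconds) for s in sources]

def timed(coro_fn, *args):
    # Run a coroutine function to completion and report elapsed wall-clock seconds.
    start = time.perf_counter()
    result = asyncio.run(coro_fn(*args))
    return result, time.perf_counter() - start

if __name__ == "__main__":
    sources = [f"source-{i}" for i in range(8)]
    par, t_par = timed(run_parallel, sources, 0.1)
    ser, t_ser = timed(run_serial, sources, 0.1)
    assert par == ser  # identical results either way
    print(f"parallel {t_par:.2f}s, serial {t_ser:.2f}s")
```

The parallel run finishes in roughly the time of one worker, the serial run in roughly eight. That gap is the only thing the extra tokens buy.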

When it's not worth it

You're paying the tax for nothing if the workload is sequential dressed up as multi-agent. The most common culprits we see in SMB deployments:

- A "5-agent customer support flow" where the agent that classifies the ticket, the agent that drafts the reply, the agent that picks the tone, and the agent that approves the send are all serialized. That's a 1-agent task with extra steps. The hand-off overhead is pure waste.

- A "multi-agent content pipeline" where one agent researches, another writes, another edits, another formats. Same problem. The work doesn't parallelize. You're paying for ceremony.

- A "multi-agent CRM update" that has a master agent calling a worker to read the record, then another to compute the change, then another to write it back. This is a function call. You bought a coordination layer to do what one tool call does.

The diagnostic question: if you forced this exact workload into a single agent with the right tools, would it complete in roughly the same wall-clock time? If yes, your multi-agent setup is performing 1x of work for 15x of cost.
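The diagnostic can be made a little more precise with a critical-path calculation. Model the workflow as steps with durations and dependencies: one agent doing everything takes the sum of the durations, while the best any team of agents can do is the longest dependency chain. Their ratio is a ceiling on the wall-clock gain, before any coordination overhead. `parallel_ceiling` and the step durations below (in made-up minutes) are hypothetical.

```python
from functools import cache

def parallel_ceiling(durations: dict[str, float], deps: dict[str, list[str]]) -> float:
    """Upper bound on wall-clock speedup from splitting a workflow across agents:
    total serial time divided by the critical (longest dependency) path.
    Assumes deps forms a DAG and ignores all coordination overhead."""
    @cache
    def finish(step: str) -> float:
        # Earliest finish if each step starts as soon as its inputs are ready.
        upstream = deps.get(step, [])
        return durations[step] + max((finish(d) for d in upstream), default=0.0)

    critical_path = max(finish(s) for s in durations)
    return sum(durations.values()) / critical_path

# "5-agent" support flow: every step waits on the one before it.
support = {"classify": 2, "draft": 5, "tone": 2, "approve": 1}
support_deps = {"draft": ["classify"], "tone": ["draft"], "approve": ["tone"]}

# Onboarding: five side effects fan out from a single signup event.
onboarding = {"signup": 1, "crm": 2, "billing": 3, "calendar": 2, "email": 2, "slack": 1}
onboarding_deps = {s: ["signup"] for s in ("crm", "billing", "calendar", "email", "slack")}

print(parallel_ceiling(support, support_deps))        # 1.0
print(parallel_ceiling(onboarding, onboarding_deps))  # 2.75
```

A ceiling of 1.0 means no multi-agent design can finish the support flow faster than one agent, so every extra token is ceremony. The onboarding flow could finish up to 2.75x faster, and that's the most the coordination bill can buy.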

The SMB version of the right answer

For an SMB running 5 virtual employees today, the architecture that's actually working in production isn't 5 agents talking to each other. It's 5 agents each owning one role, working independently, with a thin shared coordination layer for the rare moments they need to hand off.

Specifically:

- Per-employee scoped containers. Each agent has its own memory store, its own credentials, its own task queue. They don't share context unless explicitly required.

- Structured hand-offs instead of chat. When the sales agent qualifies a lead and the executive-assistant (EA) agent needs to book the meeting, the sales agent writes a structured payload to a shared task store. The EA agent reads it on its next poll cycle. No free-text chat between agents.

- Routing only at the edges. A user-facing front-door router decides which agent gets which incoming request. Once the request is in the right agent's queue, no further routing happens. The agent runs end-to-end.

- Reflection on demand, not by default. Agents don't reflect on their own output unless a quality gate fires. Most of the time the model gets it right on the first pass, and a second pass is wasted tokens.
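Here's a minimal in-process sketch of that shape, assuming a single Python process stands in for what would be separate containers and a real database or queue in production. `Handoff`, `TaskStore`, `Employee`, `front_door`, and `finalize` are hypothetical names, and the keyword rule in `front_door` stands in for whatever classifier or model call you'd actually route with.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class Handoff:
    """Structured payload one agent leaves for another (hypothetical schema)."""
    to_role: str
    kind: str
    data: dict

class TaskStore:
    """In-memory stand-in for the shared structured store (a DB table or queue in practice)."""

    def __init__(self) -> None:
        self._pending: list[Handoff] = []

    def put(self, handoff: Handoff) -> None:
        self._pending.append(handoff)

    def take(self, role: str) -> list[Handoff]:
        # Return and remove only the hand-offs addressed to this role.
        mine = [h for h in self._pending if h.to_role == role]
        self._pending = [h for h in self._pending if h.to_role != role]
        return mine

@dataclass
class Employee:
    role: str
    memory: list[str] = field(default_factory=list)  # private; never passed to other agents
    queue: list[str] = field(default_factory=list)   # requests routed to this agent

    def poll(self, store: TaskStore) -> list[Handoff]:
        # Runs on this agent's own cycle: read structured records, no chat with the sender.
        items = store.take(self.role)
        self.memory.extend(f"{h.kind}: {h.data}" for h in items)
        return items

def front_door(request: str, staff: dict[str, Employee]) -> str:
    # Routing happens once, at the edge; a keyword rule stands in for a model call.
    role = "sales" if "pricing" in request.lower() else "ea"
    staff[role].queue.append(request)
    return role

def finalize(draft: str, gate: Callable[[str], bool], reflect: Callable[[str], str]) -> str:
    # Reflection on demand: the second pass only runs when the quality gate fires.
    return reflect(draft) if gate(draft) else draft

staff = {"sales": Employee("sales"), "ea": Employee("ea")}
store = TaskStore()
routed = front_door("Question about pricing for 10 seats", staff)  # lands with "sales"
# Sales qualifies the lead, then leaves a record for the EA agent instead of messaging it.
store.put(Handoff(to_role="ea", kind="book_meeting", data={"lead": "Acme", "slot": "Tue 10:00"}))
booked = staff["ea"].poll(store)
```

The only thing the two agents share is the `Handoff` record. Neither reads the other's memory or conversation, which is what keeps them out of each other's context windows.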

That architecture costs about 1.5-2x a single-agent setup for 5 agents handling 5 roles. That's the multiplier you should be paying. If your bill is 15x, you have a coordination problem, not an agent problem.

What this means for your AI budget in 2026

The takeaway isn't "don't do multi-agent." It's: stop letting your vendor or your framework convince you that more agents talking to each other is the answer. The multi-agent architectures that ship in production are the ones where the agents barely talk at all — they each own a clear role, they read from and write to a shared structured store, and they stay out of each other's context windows.

If you're an SMB founder evaluating an AI platform and the demo shows agents chatting with each other in plain English to coordinate work, look at the token meter, then the wall-clock improvement, then ask the vendor what their architecture would do for your use case if you ran a single agent end-to-end. If the answer is "the same thing in less time and at one-tenth the cost," you've just run the right diagnostic.

Want to test the most advanced AI employees? Try it here: https://Geta.Team