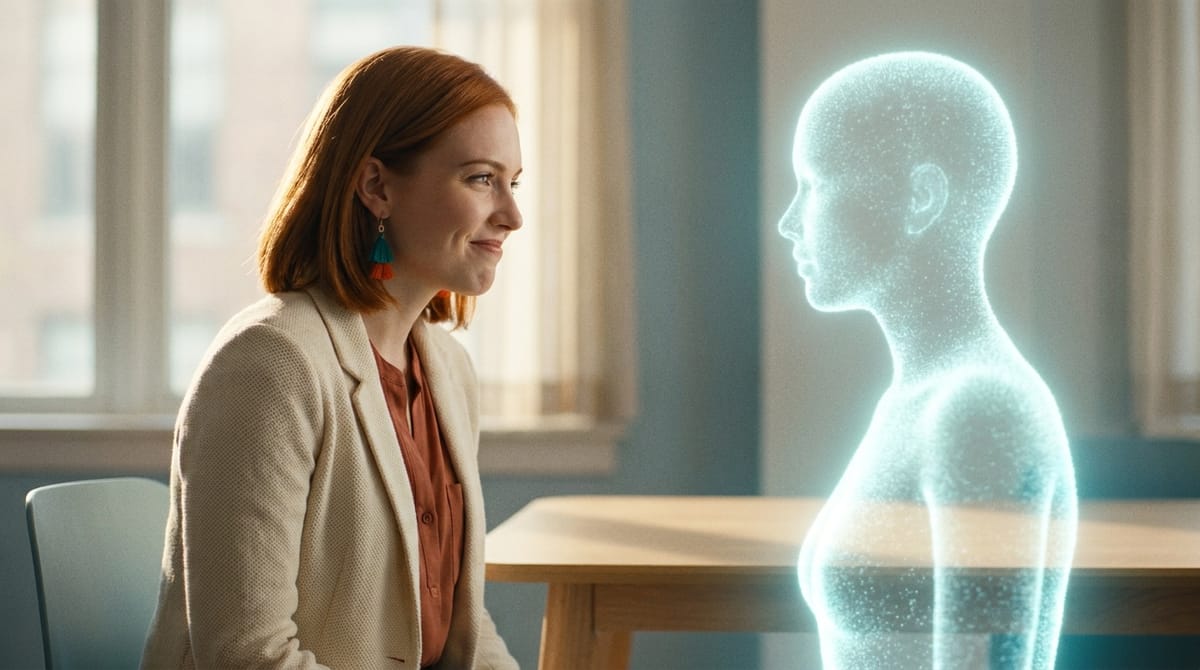

The Real Bottleneck for SMB AI Adoption Isn't Cost or Complexity. It's Trust Calibration.

When an SMB owner tells us they're not ready for AI yet, they almost always frame it as "too expensive" or "we'd need someone technical to set it up." Both are easy answers and neither is the real one. The pricing has dropped to the point where a virtual employee costs less than a part-time intern, and setup is genuinely a 5-minute conversation. The thing they're actually saying — usually without realizing it — is that they don't know how much to trust the AI today, and they don't have a way to find out without risking something they care about.

That's a trust calibration problem, not a cost or complexity one. And it's the single biggest reason SMB AI deployments stall at week three.

What "trust calibration" actually means

Trust between a human and an AI employee isn't a switch. It's a dial, and the dial has to move based on evidence. You give the AI a small thing. It does it well. You give it a slightly bigger thing. It does that well too. Three months later you've handed it real responsibility and the relationship works because trust accumulated through repeated, observable competence. Same as hiring a person.

What kills SMB deployments is that most platforms don't expose this dial. They give you two settings: "approve every action before it executes" or "let it run autonomously." The first is so high-friction that the owner stops using it within a week. The second is so high-stakes that nobody sane turns it on. The middle — where 95% of useful work actually happens — is undefined.

So the SMB owner sits at week three with an AI that is either annoying them constantly with approval prompts, or sitting idle because they're scared to flip it to autonomous. They cancel the trial. They report back to their network that "AI isn't ready yet." It's not the AI. It's the calibration interface.

The four-stage trust ladder

The deployments that survive past month two have, intentionally or not, reproduced something like a four-stage ladder. Worth naming the stages because once you see them, you can design for them.

Stage 1 — Drafts only. The AI suggests, you do everything. She drafts the email, you read it, you send it from your inbox. She prepares the meeting brief, you skim it, you walk into the meeting yourself. The risk surface is essentially zero because nothing happens without you pressing the button. This is where every relationship starts.

Stage 2 — Action with mandatory pre-review. The AI is allowed to take the action, but only after you approve. She drafts the reply and sends it from her own email after you click approve. She books the meeting and puts it on your calendar after you nod. This stage feels small but it's actually the biggest unlock — the AI is now in the loop of doing, not just suggesting, and the audit trail starts to mean something.

Stage 3 — Action with notification. The AI takes the action and tells you afterward. The reply went out, here's what she said. The meeting got booked, here's the time. You can roll back if something's wrong, but you're no longer the bottleneck. This is the stage where SMB productivity actually compounds, because the AI is no longer waiting on your inbox.

Stage 4 — Autonomous. The AI just does it. You see it in the weekly report, not in real time. This is the stage that gets all the press but is genuinely rare in practice — usually reserved for narrow, well-understood, low-stakes loops (book the recurring meeting, send the standard onboarding email, post the standard end-of-week update).

The point isn't that everyone should aim for Stage 4. The point is that healthy deployments live mostly at Stages 2 and 3, with different actions sitting at different stages depending on consequence. "Drafting a reply to a customer complaint" might be at Stage 1 forever. "Confirming the recurring weekly invoice payment" might hit Stage 4 by week six. Both are correct.
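To make the ladder concrete, here is a minimal Python sketch of per-action stages. It is an illustration under assumptions, not any platform's actual API: the names `Stage`, `TrustPolicy`, and `decide`, and the action keys, are all hypothetical.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Stage(IntEnum):
    DRAFT_ONLY = 1    # AI drafts, the human performs the action
    PRE_REVIEW = 2    # AI acts only after explicit approval
    NOTIFY_AFTER = 3  # AI acts, then tells the human
    AUTONOMOUS = 4    # AI acts; the human sees it in a periodic report


@dataclass
class TrustPolicy:
    # Hypothetical mapping from action name to its current stage.
    stages: dict[str, Stage] = field(default_factory=dict)

    def stage_for(self, action: str) -> Stage:
        # Assumption: unknown actions fall back to the safest stage.
        return self.stages.get(action, Stage.DRAFT_ONLY)

    def decide(self, action: str, approved: bool = False) -> str:
        """Return what the AI may do for this action right now."""
        stage = self.stage_for(action)
        if stage is Stage.DRAFT_ONLY:
            return "draft"
        if stage is Stage.PRE_REVIEW:
            return "execute" if approved else "queue_for_approval"
        if stage is Stage.NOTIFY_AFTER:
            return "execute_and_notify"
        return "execute_and_log"


policy = TrustPolicy({
    "reply_to_complaint": Stage.DRAFT_ONLY,
    "book_meeting": Stage.PRE_REVIEW,
    "pay_recurring_invoice": Stage.AUTONOMOUS,
})
print(policy.decide("reply_to_complaint"))     # draft
print(policy.decide("book_meeting"))           # queue_for_approval
print(policy.decide("pay_recurring_invoice"))  # execute_and_log
```

The design choice worth noticing is that the stage lives on the action, not on the AI as a whole, so the complaint reply and the recurring payment can sit at opposite ends of the ladder at the same time.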

Why platforms collapse this to binary

Most AI products today expose the trust dial as a single setting in the admin panel: "Allow AI to take actions: yes/no." Sometimes there's a second level for high-risk actions like payments. That's it.

The reason is design effort, not technical limitation. Building the four-stage model means you need per-action permissions, per-skill trust scoring, an approval queue UI that doesn't feel like a chore, an audit log the user actually reads, and a rollback mechanism that works even after the action has executed. That's a lot of surface area for something most product teams treat as a single checkbox.

But it's the surface area that determines whether SMB deployments stick. Without it, the user has no way to grow the relationship. With it, they have a path.

What this implies for SMB product design

A few things follow if you take the trust ladder seriously.

You stop asking the user "do you trust the AI?" at signup, because the question is meaningless before they've worked with her. You ask it per-action, per-skill, after she's demonstrated something. The answer is allowed to be different for "drafts emails to leads" (high trust by week two) versus "sends invoices over $5k" (Stage 1 forever).

You design the approval queue to feel like reviewing a teammate's work, not auditing a machine. Show the AI's reasoning in plain language. Show what she'll touch and what she won't. Show a one-click "approve and remember this kind of decision" that quietly promotes the action up the ladder for next time.
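One way the "approve and remember" click could work is sketched below in Python. Everything here is hypothetical: the `Ladder` class, the integer stages 1 to 4, and especially the per-action ceiling, which is an added assumption so that high-consequence actions can never be promoted past a fixed rung.

```python
from dataclasses import dataclass, field

MAX_STAGE = 4  # Stage 4 = autonomous


@dataclass
class Ladder:
    # action -> current stage (1..4); hypothetical storage
    stages: dict[str, int] = field(default_factory=dict)
    # action -> highest stage the owner will ever allow (assumption)
    ceilings: dict[str, int] = field(default_factory=dict)

    def approve(self, action: str, remember: bool = False) -> int:
        """Record an approval; with remember=True, promote one rung."""
        # Assumption: anything in the approval queue is at least Stage 2.
        current = self.stages.get(action, 2)
        ceiling = self.ceilings.get(action, MAX_STAGE)
        if remember and current < ceiling:
            current += 1
        self.stages[action] = current
        return current


ladder = Ladder(
    stages={"reply_to_lead": 2, "send_invoice": 2},
    ceilings={"send_invoice": 2},  # never promoted past pre-review
)
print(ladder.approve("reply_to_lead", remember=True))  # 3
print(ladder.approve("send_invoice", remember=True))   # 2
```

A plain approval leaves the stage where it is. Only the explicit "remember" click moves the dial, which keeps promotion a decision the owner made rather than something the system inferred.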

You make rollback a first-class feature, not a hidden one. The reason Stage 3 works at all is that the user knows they can undo something the AI did. Hide the undo and they refuse to leave Stage 1.
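As a rough sketch of what first-class rollback might look like, the Python below stores an undo step next to every executed action. The names `ActionLog`, `run`, and `rollback_last` are hypothetical, and the sketch assumes each action has a clean inverse. Some actions, such as an email that has already gone out, would need a compensating step (a follow-up correction) instead.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Executed:
    description: str
    undo: Callable[[], None]  # how to reverse this specific action


class ActionLog:
    def __init__(self) -> None:
        self._entries: list[Executed] = []

    def run(self, description: str,
            do: Callable[[], None], undo: Callable[[], None]) -> None:
        """Execute the action and remember how to reverse it."""
        do()
        self._entries.append(Executed(description, undo))

    def rollback_last(self) -> Optional[str]:
        """Undo the most recent action; return its description, or None."""
        if not self._entries:
            return None
        entry = self._entries.pop()
        entry.undo()
        return entry.description


calendar: set[str] = set()
log = ActionLog()
log.run(
    "Booked intro call, Tue 10:00",
    do=lambda: calendar.add("Tue 10:00"),
    undo=lambda: calendar.discard("Tue 10:00"),
)
print(log.rollback_last())  # Booked intro call, Tue 10:00
print(calendar)             # set()
```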

You make the trust state visible. The user should see, at a glance, "Lyla is currently allowed to send drafts to me, send replies after my approval, and book meetings without asking. She is not allowed to send invoices, post publicly, or contact new prospects." That's not a settings screen. That's the relationship made legible.
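A summary like that can be generated from the stored permissions rather than written by hand. The Python sketch below is one hypothetical way to do it. The function names, the stage-to-phrase table, and the input shapes are all assumptions made for illustration.

```python
# Hypothetical phrasing for each stage of the ladder.
STAGE_SUFFIX = {
    1: "as drafts for you",
    2: "after your approval",
    3: "and notify you afterward",
    4: "without asking",
}


def join_phrases(items: list[str], conj: str) -> str:
    """Join phrases in plain English: 'a', 'a and b', 'a, b, and c'."""
    if len(items) <= 1:
        return "".join(items)
    if len(items) == 2:
        return f"{items[0]} {conj} {items[1]}"
    return ", ".join(items[:-1]) + f", {conj} {items[-1]}"


def summarize(name: str, allowed: dict[str, int], denied: list[str]) -> str:
    """Render the current trust state as one or two readable sentences."""
    sentences = []
    if allowed:
        phrases = [f"{verb} {STAGE_SUFFIX[stage]}"
                   for verb, stage in allowed.items()]
        sentences.append(
            f"{name} is currently allowed to {join_phrases(phrases, 'and')}.")
    if denied:
        sentences.append(
            f"{name} is not allowed to {join_phrases(denied, 'or')}.")
    return " ".join(sentences)


print(summarize(
    "Lyla",
    {"send replies": 2, "book meetings": 4},
    ["send invoices", "post publicly", "contact new prospects"],
))
```

Because the sentence is derived from the same data that gates the actions, the summary cannot drift out of sync with what the AI is actually permitted to do.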

The real opportunity

The SMB AI market doesn't get unlocked by cheaper models or better demos. It gets unlocked by the first platforms that stop treating trust as a checkbox and start treating it as the central UX problem. The deployments that survive aren't the ones with the most autonomy. They're the ones where the human can climb the trust ladder one rung at a time, with confidence, and watch the relationship grow.

That's not an AI capability question. It's a product design question. And the platforms that get it right are about to eat the SMB market.

Want to test the most advanced AI employees? Try them here: https://Geta.Team